SLM vs LLM: A Practical Guide for Enterprises Adopting Generative AI

Before comparing the two, let’s start with the basics: what are Small Language Models (SLMs) and Large Language Models (LLMs), and how do they fit into the AI ecosystem? This blog covers the main differences between SLMs and LLMs, how each can be used, and how businesses can choose the best model for their needs.

“The Large Language Model Market is estimated to be worth USD 6.4 billion in 2024 and is projected to reach USD 36.1 billion by 2030 at a CAGR of 33.2% during the forecast period. It has been significantly influenced by advancements in NLP, particularly through technologies like transformer-based architectures such as BERT, GPT, and their variants. These models have revolutionized various sectors, including language translation, content generation, and conversational AI.”– Global News Wire

What Are Small Language Models? A Quick Overview

Small Language Models (SLMs) were developed for organizations seeking more resource-efficient AI that can still understand human language and produce detailed responses. They differ from LLMs in three ways: they are trained on a more restricted dataset, they use fewer parameters, and they are built on a more compact network design.

On closer examination, SLMs are trained with statistical learning methods and typically rely on compact neural networks, which makes them more efficient. However, they perform worse than LLMs on complex tasks. Companies that lack substantial computational resources use them as a more resource-efficient alternative. SLMs are well suited to basic language processing, which explains their growing adoption among budget-constrained enterprises.

Key Benefits of Small Language Models for Modern AI Systems

- Data Security: SLMs reduce the risk of data leakage, which is crucial for businesses handling sensitive data, because they can be implemented locally without depending on external APIs.

- Real-Time Responsiveness: Faster inference makes SLMs a strong fit for real-time applications such as live chat support and robotic process automation (RPA).

- Resource Efficiency: SLMs work well in settings like offline apps or mobile devices where hardware capacity is constrained.

How Training Datasets Shape SLMs

SLMs work well because of their manageable parameter sizes and targeted training datasets. Relevance and specificity are given precedence over size in these models.

- SLMs are frequently trained on domain-specific datasets so that they apply well to particular tasks. These carefully curated datasets include texts from specialist fields, such as financial reports, technical manuals, medical records, or customer service exchanges. By concentrating on domain-specific data, SLMs can build a thorough grasp of the precise language, vocabulary, and context their target applications require. This focused training lets the models work precisely and efficiently in their specialized fields, producing outputs that are dependable and suited to the context.
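
The following minimal Python sketch filters a mixed corpus down to finance-related text. It illustrates what a curated, domain-specific dataset looks like in practice. The keyword list, the `domain_score` and `curate` helpers, and the 0.1 threshold are all hypothetical. Production pipelines more often rely on trained classifiers and human review, but the principle of keeping only relevant documents is the same.

```python
# Hypothetical vocabulary for a finance-focused SLM; a real pipeline would
# more likely use a trained domain classifier than simple keyword matching.
FINANCE_TERMS = {"revenue", "ebitda", "dividend", "balance", "liabilities", "audit"}


def domain_score(text: str, terms: set[str]) -> float:
    """Fraction of tokens in `text` that belong to the domain vocabulary."""
    tokens = [t.strip(".,;:!?()").lower() for t in text.split()]
    tokens = [t for t in tokens if t]
    if not tokens:
        return 0.0
    return sum(t in terms for t in tokens) / len(tokens)


def curate(corpus: list[str], terms: set[str], min_score: float = 0.1) -> list[str]:
    """Keep only documents whose domain score meets the threshold."""
    return [doc for doc in corpus if domain_score(doc, terms) >= min_score]


corpus = [
    "Quarterly revenue rose while EBITDA margins held steady.",
    "The recipe calls for two cups of flour and one egg.",
    "Auditors flagged new liabilities on the balance sheet.",
]
print(curate(corpus, FINANCE_TERMS))
```

Here the cooking sentence is dropped and both finance sentences are kept.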

Where Small Language Models Deliver the Greatest Impact

SLMs are a good fit for applications that require strict data boundaries, speed, and efficiency. They are often used where dependable performance is needed without the expense and administrative burden of large-scale infrastructure.

- Edge & On-Premises Deployments: Because of their small footprint, SLMs can run in secure environments with limited infrastructure budgets, or where data must stay on-premises.

- Domain-Specific Summarization: They can accurately summarize text within a specific domain, such as legal contracts, compliance requirements, or medical notes.

- High-Volume, Repetitive Interactions: They can effectively handle millions of routine requests, such as classifying support tickets or ranking search results.

- Customer Service Automation: SLMs can power task-specific chatbots that answer frequently asked questions, handle billing inquiries, or deliver product information with minimal latency.

What Are Large Language Models? A Strategic Overview for Business Leaders

Large Language Models (LLMs) have become a key component of natural language processing, powering uses such as chatbots and content creation. They are designed to understand requests such as customer inquiries and reply in a human-sounding voice. To do this, they use deep learning to analyze and generate text based on natural language patterns. LLMs are built on the transformer architecture, which in its original form pairs an encoder with a decoder; many generative LLMs, such as the GPT family, use only the decoder. Input text is split into tokens, and the model learns the relationships among them.

We have listed the basic ideas of these models below:

- Deep Learning Architecture: Tokens are converted into numerical representations (embeddings). When generating text, LLMs weigh the relative importance of each token in its context.

- Machine Learning: Developers train models with algorithms that adjust a large number of parameters so the model makes the most accurate predictions. During the initial training phase (pretraining), LLMs learn the patterns of natural language. This lets them generate the human-like wording best suited to a given situation.
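
The two ideas above, numerical representations and weighing relative importance, correspond to embeddings and attention in transformer models. The toy Python sketch below illustrates them with deliberate simplifications:

- a whitespace tokenizer instead of a subword tokenizer;
- hand-picked three-dimensional vectors (hypothetical, not learned);
- scaled dot-product attention without the learned query, key and value projections a real model would apply.

```python
import math

# Hypothetical 3-dimensional embeddings; real LLMs learn vectors with
# thousands of dimensions during training.
EMBEDDINGS = {
    "the": [0.1, 0.0, 0.1],
    "bank": [0.9, 0.2, 0.1],
    "approved": [0.3, 0.8, 0.0],
    "loan": [0.8, 0.3, 0.2],
}


def tokenize(text: str) -> list[str]:
    """Toy whitespace tokenizer; production LLMs use subword schemes such as BPE."""
    return text.lower().split()


def softmax(scores: list[float]) -> list[float]:
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]  # subtract max for numerical stability
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(query: list[float], keys: list[list[float]]) -> list[float]:
    """Scaled dot-product attention: how strongly the query token attends to each key."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    return softmax(scores)


tokens = tokenize("The bank approved the loan")
vectors = [EMBEDDINGS[t] for t in tokens]
# How much does the token "loan" attend to each token in the sentence?
weights = attention_weights(EMBEDDINGS["loan"], vectors)
for token, weight in zip(tokens, weights):
    print(f"{token:>9}: {weight:.3f}")
```

In this toy example "loan" places more weight on "bank" than on "the", which is the kind of contextual weighting described above. In a real model, these weights are then used to combine value vectors into a new, context-aware representation of each token.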

Strategic Advantages of Large Language Models for Enterprises

- Versatility: Without requiring a lot of retraining, these models can handle anything from simple writing assignments to complex problem-solving.

- Creativity: Thanks to their larger training datasets, LLMs can produce highly original outputs, such as unique stories or in-depth reports.

- Comprehensive Knowledge: LLMs perform exceptionally well on activities that call for generalized or broad language comprehension.

How Training Datasets Shape LLMs

The quantity and quality of training datasets are key factors in LLM efficacy. These models can comprehend context and produce coherent content by processing a wide range of texts, including books, articles, and webpages.

- The models’ capacity to comprehend and produce text across a range of domains is improved by this variety, which ensures that they are exposed to various writing styles, settings, and subjects. Diverse datasets are essential for the models to understand the subtleties of human language and generate outputs that are pertinent to the context.
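
Real LLMs learn with neural networks, not word counts. Still, a toy bigram model in Python shows why breadth of training text matters: a model can only predict continuations for contexts it has seen. The corpora and helper names below are hypothetical.

```python
from collections import Counter, defaultdict
from typing import Optional


def train_bigram(corpus: list[str]) -> dict[str, Counter]:
    """Count which word follows which; a toy stand-in for next-token pretraining."""
    counts: dict[str, Counter] = defaultdict(Counter)
    for sentence in corpus:
        tokens = sentence.lower().split()
        for prev, nxt in zip(tokens, tokens[1:]):
            counts[prev][nxt] += 1
    return counts


def predict_next(model: dict[str, Counter], word: str) -> Optional[str]:
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = model.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


narrow_corpus = ["the patient reports chest pain", "the patient reports mild fever"]
diverse_corpus = narrow_corpus + [
    "the contract renews every year",
    "the code raises an error",
]

narrow_model = train_bigram(narrow_corpus)
diverse_model = train_bigram(diverse_corpus)
print(predict_next(narrow_model, "contract"), predict_next(diverse_model, "contract"))
```

Trained only on clinical sentences, the model has nothing to say after "contract". Adding text from other domains fills that gap. LLM pretraining applies the same principle at a vastly larger scale.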

Where Large Language Models Deliver the Greatest Impact

LLMs are most useful when businesses need original content creation, cross-domain reasoning, or context-rich responses. Their advanced reasoning and broad knowledge make them well suited to information-heavy, strategic work.

- Complex Query Understanding: LLMs are good at interpreting complex or ambiguous questions in areas such as financial analysis or medical diagnosis.

- Knowledge Management & Research: R&D teams and enterprise knowledge portals can benefit from LLMs’ ability to acquire and synthesize information from several knowledge bases.

- Cross-Functional Applications: Without having to create several specific models, they can be used in HR, sales, and operations due to their general-purpose design.

- Creative Content Generation: With great fluency and nuance, they can write campaign messages, product descriptions, and marketing copy.

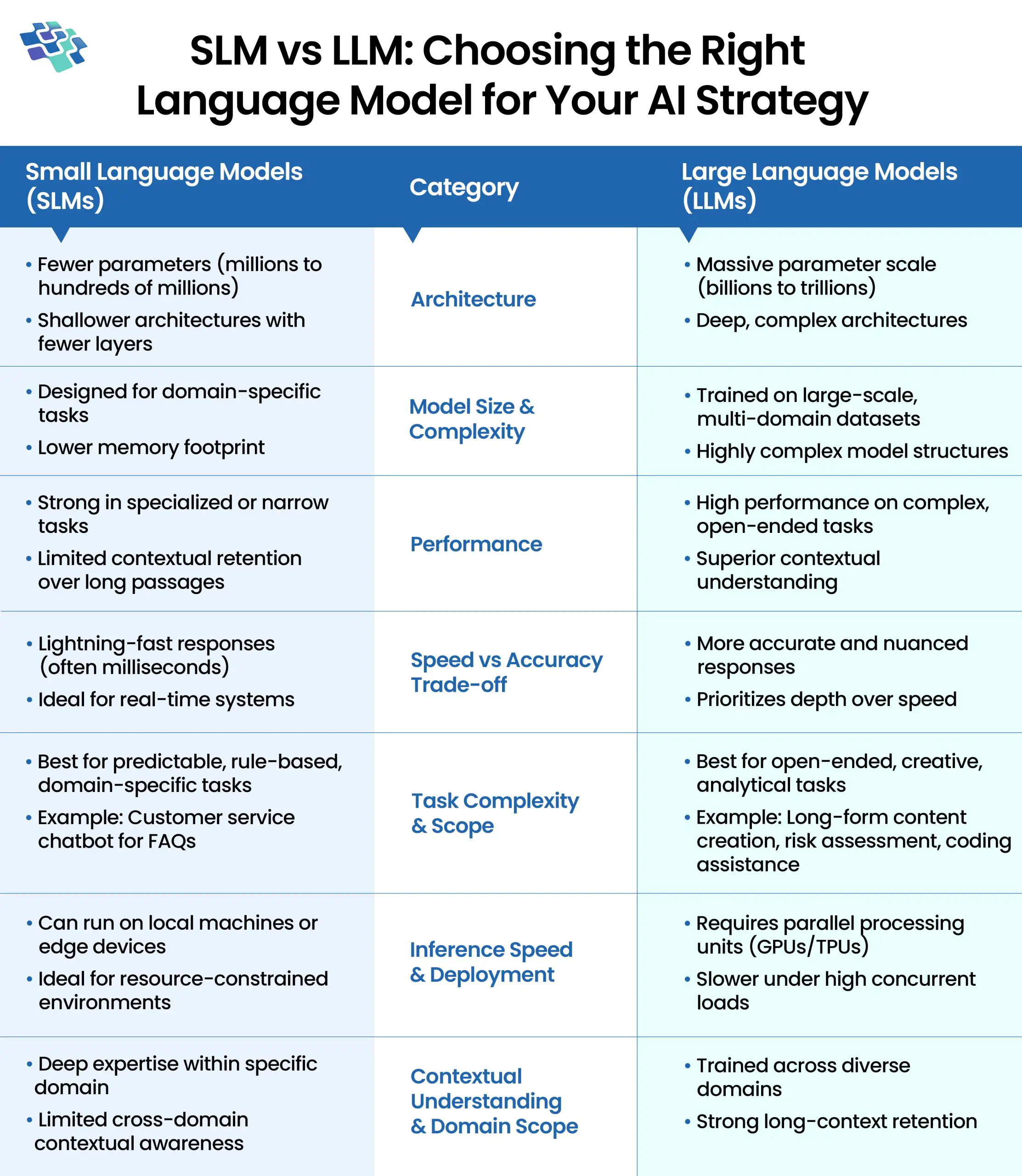

SLM vs LLM: Architecture, Accuracy & ROI Compared

Let’s take a closer look at the key differences in the SLM vs LLM landscape through a structured, side-by-side comparison. Below, we break down architecture, performance, scalability, and real-world applications to clearly highlight how each model type serves distinct business and technical needs.

1. SLM vs LLM: Architecture

Small Language Models (SLMs): SLMs have a smaller number of parameters, often between a few million and a few hundred million. Compared to LLMs, they need fewer resources and are lighter due to their smaller size.

SLMs’ key features include:

- Lower Computational Requirements

- Focused Training Data

- Shallower Architectures

Large Language Models (LLMs): LLMs are defined by their enormous number of parameters, frequently in the billions. OpenAI’s GPT-3, for example, has 175 billion parameters. Models of this size, like OpenAI’s GPT-4, require a significant amount of processing power to train and deploy. Their size lets them capture a wide variety of language nuances and patterns.

LLMs’ key features include:

- Deep Architectures

- Extensive Training Data

- High Computational Requirements
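
Parameter count translates fairly directly into hardware requirements. A common back-of-the-envelope estimate is that model weights need `parameters × bytes per parameter` of memory. The Python sketch below applies this estimate to a few sizes, assuming 16-bit (fp16) weights. The SLM sizes are illustrative, and the 175-billion figure matches GPT-3. The estimate covers weights only. It ignores activations, the attention cache, and, for training, gradients and optimizer state, so real requirements are higher.

```python
# Approximate storage cost of one parameter at common numeric precisions.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str = "fp16") -> float:
    """Memory needed to hold the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


models = {
    "SLM, 100M parameters": 100e6,
    "SLM, 500M parameters": 500e6,
    "LLM, 175B parameters (GPT-3 scale)": 175e9,
}
for name, params in models.items():
    print(f"{name}: ~{weight_memory_gb(params):.1f} GB of weights at fp16")
```

At fp16, a 175-billion-parameter model needs about 350 GB just for its weights. That is more than a single 80 GB GPU can hold, which is why such models are typically split across several accelerators. A few-hundred-million-parameter SLM, by contrast, fits comfortably in the memory of an ordinary laptop.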

2. SLM vs LLM: Performance

Small Language Models (SLMs): Even though SLMs might not be as good as LLMs at handling extremely complicated tasks, they can excel in some fields or applications where the training data is extremely pertinent. For real-time applications or systems with limited resources, SLMs’ reduced size allows them to process requests and produce results more quickly.

Large Language Models (LLMs): The ability of LLMs to comprehend and produce complicated language makes them ideal for a variety of activities, including the development of intricate content, complex question-answering, and subtle text generation. Because of their size, they can retain context throughout lengthy text passages, which enhances their performance on activities requiring in-depth contextual knowledge. LLMs’ rich parameterization and wide training data enable them to handle a wide range of applications, from summarization and translation to creative writing and coding support.

3. SLM vs LLM: Speed vs. Accuracy Trade-offs

Small Language Models (SLMs): SLMs respond very quickly, often in milliseconds. This makes them well suited to real-time uses such as instant language translation in messaging apps or live chat support. In intricate situations, however, they may sacrifice some precision or nuance.

Large Language Models (LLMs): LLMs offer more detailed and precise answers, but at the cost of speed. They work well for tasks where response time isn’t crucial, such as creating lengthy content or performing in-depth data analysis. For example, a financial organization using an LLM for risk assessment would prioritize accuracy over speed.

4. SLM vs LLM: Task Complexity & Scope

Small Language Models (SLMs): SLMs are particularly good at specific, limited tasks. For example, a customer care chatbot that answers frequently asked questions about return policies or product details can make effective use of an SLM. For predictable queries, these models offer prompt, clear answers.

Large Language Models (LLMs): LLMs are the recommended option for complicated, open-ended activities. Their extensive knowledge base and contextual awareness benefit content production, in-depth analysis, and creative writing.

5. SLM vs LLM: Model Size & Complexity

Small Language Models (SLMs): Because SLMs are designed for domain-specific tasks, they are trained on smaller, more focused datasets. They typically have from a few million to a few hundred million parameters.

Large Language Models (LLMs): LLMs are trained on larger and more diverse datasets, which gives them better comprehension and performance on challenging tasks. They typically have billions of parameters, and the largest reach into the trillions.

6. SLM vs LLM: Inference Speed

Small Language Models (SLMs): Small models can run on local machines or edge devices, generating responses within acceptable timeframes while requiring significantly less computational power and infrastructure investment.

Large Language Models (LLMs): Large models require multiple parallel processing units and substantial computational infrastructure to generate outputs efficiently, especially under high user loads, which can increase latency and operational costs during inference.
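
A widely used rule of thumb helps explain the gap in inference speed. When a model generates text one token at a time for a single user, each new token requires reading all of the model’s weights from memory. Memory bandwidth divided by model size therefore caps tokens per second. The Python sketch below applies this estimate. The 100 GB/s bandwidth figure and the model sizes are illustrative assumptions, not benchmarks.

```python
def max_decode_tokens_per_sec(num_params: float, bytes_per_param: float,
                              bandwidth_gb_per_s: float) -> float:
    """Rough ceiling on single-stream generation speed.

    Assumes decoding is memory-bandwidth bound: producing each new token
    requires streaming every weight from memory once. Ignores compute limits,
    the attention cache, and batching, so treat the result as an upper bound.
    """
    model_bytes = num_params * bytes_per_param
    return bandwidth_gb_per_s * 1e9 / model_bytes


# Hypothetical device with 100 GB/s of memory bandwidth, fp16 weights (2 bytes each).
for name, params in [("500M-parameter SLM", 500e6), ("7B-parameter model", 7e9)]:
    rate = max_decode_tokens_per_sec(params, 2.0, 100.0)
    print(f"{name}: at most ~{rate:.0f} tokens/s")
```

Under these assumptions, the smaller model has about fourteen times the headroom on the same hardware. This is why LLM serving depends on multiple high-bandwidth accelerators working in parallel.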

7. SLM vs LLM: Contextual Understanding & Domain Specificity

Small Language Models (SLMs): SLMs are typically trained on domain-specific datasets, enabling them to develop deep expertise within a focused area. While they may lack broad, cross-domain contextual awareness, they excel in specialized tasks where precision and relevance matter most. Their targeted training makes them highly efficient for industry-specific applications and controlled environments.

Large Language Models (LLMs): LLMs are trained on vast, diverse datasets spanning multiple domains, aiming to approximate broader human-like understanding. This extensive exposure allows them to perform well across varied topics and complex scenarios. Their versatility makes them adaptable for downstream tasks such as programming, advanced analytics, creative writing, and multi-domain problem solving.

Integrating SLMs and LLMs for Smarter AI Systems

An award-winning intelligent automation provider needed a robust solution to help its client extract more than 350 fields from lease-related documents arriving in varying formats, qualities, languages, and structures. After testing multiple technology combinations across five approaches, the team found that combining SLMs and LLMs worked best.

By combining large language models for advanced reasoning and contextual understanding with small language models optimized for structured extraction tasks, the solution delivered a seamless and consolidated user experience, even before extensive field-level training. Intelligent document processing (IDP) handled classification and segmentation, while generative AI enhanced complex field extraction. Robotic process automation (RPA) streamlined downstream data entry.

This hybrid architecture achieved an 82% accuracy rate. For fields below the confidence threshold, a human-in-the-loop review process ensured validation and correction. Over time, machine learning models learned from human feedback, continuously improving performance and reducing manual intervention, demonstrating the power of a well-orchestrated SLM–LLM integration strategy.
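
The routing and human-in-the-loop steps above can be sketched as confidence-based triage. Several details in the Python example below are hypothetical, because the case study does not disclose its actual threshold or routing logic:

- the field names and the hard-coded model outputs;
- the `is_complex` flag that routes a field to the LLM or the SLM;
- the 0.85 confidence threshold.

```python
from dataclasses import dataclass


@dataclass
class Extraction:
    field: str
    value: str
    confidence: float  # model-reported confidence in [0, 1]
    source: str        # "slm", "llm", or "human"


def route(is_complex: bool) -> str:
    """Hypothetical rule: structured fields go to the SLM, complex ones to the LLM."""
    return "llm" if is_complex else "slm"


def triage(extractions: list[Extraction], threshold: float = 0.85):
    """Split results into auto-accepted fields and a human review queue."""
    accepted = [e for e in extractions if e.confidence >= threshold]
    review = [e for e in extractions if e.confidence < threshold]
    return accepted, review


def apply_review(review: list[Extraction], corrections: dict[str, str],
                 feedback: list[tuple[str, str]]) -> list[Extraction]:
    """Apply reviewer corrections and log them as examples for future retraining."""
    fixed = []
    for e in review:
        value = corrections.get(e.field, e.value)  # reviewer may confirm the original
        feedback.append((e.field, value))
        fixed.append(Extraction(e.field, value, 1.0, "human"))
    return fixed


# Simulated model outputs for three lease fields (values are made up).
results = [
    Extraction("lease_start_date", "2024-01-01", 0.97, route(is_complex=False)),
    Extraction("monthly_rent", "4,500 USD", 0.91, route(is_complex=False)),
    Extraction("renewal_terms", "Auto-renews yearly", 0.62, route(is_complex=True)),
]
accepted, review = triage(results)
feedback: list[tuple[str, str]] = []
fixed = apply_review(review, {"renewal_terms": "Renews for 5 years unless cancelled"}, feedback)
print([e.field for e in accepted], [e.field for e in fixed], feedback)
```

The `feedback` list stands in for the loop described above. Corrections collected during review become training data that can raise model confidence over time and shrink the review queue.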

SLMs vs LLMs: How to Choose the Right Model for Your Needs

The choice of language model depends on task complexity, budget, and available infrastructure. Understanding these factors will help you decide whether an LLM or an SLM is the better fit for your needs. Let’s break down the main considerations.

- Infrastructure: LLMs often need high-performance computing environments, such as powerful GPUs and cloud-based platforms. SLMs can run effectively in edge environments or on local devices, which reduces the load on infrastructure.

- Application Requirements: LLMs are best suited to complex activities that require deep reasoning, contextual awareness, and creativity. SLMs do better on focused, narrowly scoped work with well-defined goals.

- Budget: Implementing LLMs usually requires substantial spending on training, deployment, and ongoing maintenance. SLMs offer a more affordable option for companies with smaller budgets.
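
The three factors above can be expressed as a simple rule-based checklist. This Python sketch is hypothetical: the `Requirements` fields and the order of the rules are one possible interpretation, not a formal selection method. Real decisions also weigh data governance, latency targets, and expected request volume.

```python
from dataclasses import dataclass


@dataclass
class Requirements:
    needs_deep_reasoning: bool   # complex, open-ended, or multi-domain tasks
    has_gpu_or_cloud: bool       # access to high-performance infrastructure
    budget_constrained: bool     # limited funds for deployment and maintenance
    runs_on_edge_device: bool    # must run locally, e.g. mobile or offline apps


def recommend_model(req: Requirements) -> str:
    """Map the infrastructure, application, and budget factors to a model type."""
    if req.runs_on_edge_device or not req.has_gpu_or_cloud:
        return "SLM"     # infrastructure rules out a large model
    if not req.needs_deep_reasoning:
        return "SLM"     # focused, well-defined work rarely justifies LLM cost
    if req.budget_constrained:
        return "Hybrid"  # SLM for routine requests, LLM only where reasoning is needed
    return "LLM"


analytics_team = Requirements(needs_deep_reasoning=True, has_gpu_or_cloud=True,
                              budget_constrained=False, runs_on_edge_device=False)
print(recommend_model(analytics_team))  # LLM
```

The "Hybrid" outcome reflects the combined SLM and LLM approach described in the case study above, where each model type handles the work it is best suited for.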

Finding the Right Balance: SLM or LLM for Your Needs

Choosing between Small Language Models (SLMs) and Large Language Models (LLMs) depends on your business goals, technical requirements, and infrastructure readiness.

SLMs are lightweight, cost-effective, and faster to deploy, making them ideal for domain-specific applications, real-time processing, and organizations operating with limited computational resources. While efficient, they may face limitations in advanced reasoning and long-context understanding.

LLMs, on the other hand, deliver superior performance across complex, open-ended, and multi-domain tasks. Their broader contextual intelligence and adaptability make them well-suited for enterprise automation, analytics, content generation, and strategic decision-making, though they demand higher investment and infrastructure.

The right model should align with your use case, scalability needs, and ROI expectations. As an enterprise AI software development company, NextGen Invent builds bespoke SLM and LLM solutions that integrate seamlessly into existing ecosystems, ensuring performance optimization, scalability, governance alignment, and measurable business outcomes that drive long-term competitive advantage and innovation.
